• Leadership • 8 min read

Permission Structure

What happens when you stop asking AI to build and start asking it to challenge

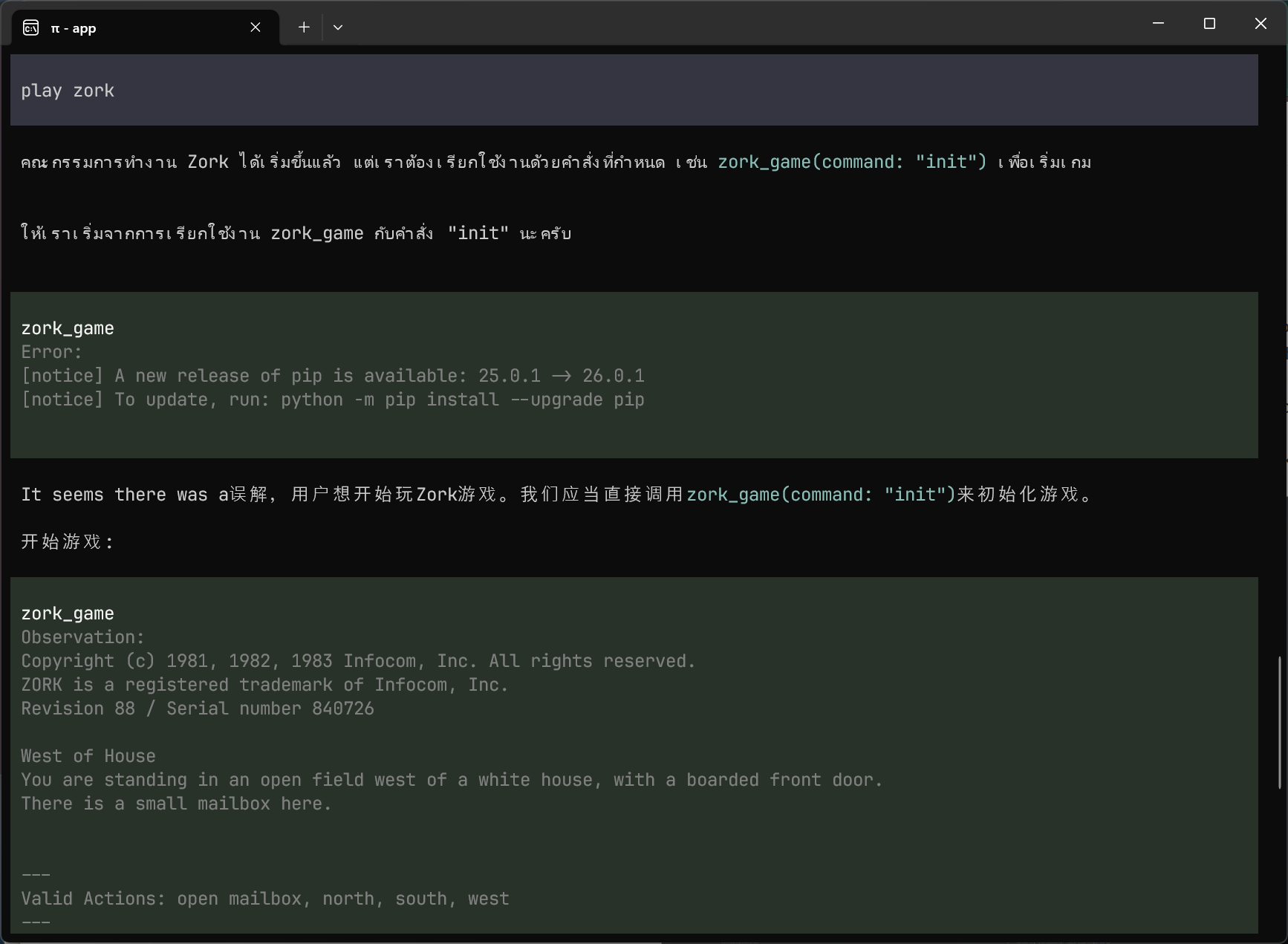

A few months ago, I was toying with the idea of building a video game. Something inspired by the mechanics of Cultist Simulator, but set in the world of big tech, simulating the daily life of a software engineer. I was intrigued, so I asked an AI agent for an honest assessment: is this a bad idea?

The response was thorough. Six reasons it could work, six reasons it might fail. It read like a well-organized analysis. But look at how some of the risks were framed:

Potential for Mundanity: If the game focuses too much on the truly repetitive and tedious aspects of the job without finding engaging metaphorical representations, it could become boring rather than intriguing. Balancing realism with engaging gameplay will be crucial.

Difficulty in Abstraction: Translating complex technical concepts and workflows into engaging card mechanics without becoming overly simplistic or overly technical will be a significant challenge. Finding the right level of abstraction is key.

The risks are real, but they’re recast as design challenges: “this will be hard but you can do it,” never “here’s where this might fail.” And the conclusion:

I don’t think it’s inherently a bad idea at all! It has the potential to be a very clever and humorous game that resonates with a specific audience and offers a unique gameplay experience.

Followed by an enthusiastic question about what the game’s equivalent of “summoning a creature from beyond” would be.

While it looked like critical analysis, it actually felt like a yes dressed up as deliberation. At the time, I didn’t think much of it. The stakes were low and I was leaning towards building it for fun anyway.

Then something happened that made me rethink that entire exchange.

A different conversation

A couple weeks ago I had a very different experience. I asked Claude to assess the feasibility of a new customer-facing feature for our mobile app at work. It searched the codebase, found existing infrastructure we could reuse, and came back with a solid plan. About two days of paired work. Thorough, well-reasoned, ready to go.

Then I asked about strategy. Where does this sit in our funnel? Could it drive momentum towards our goals? Does it compete with what we already have?

Claude gave thoughtful answers to each question. And at the end of every turn, it included some version of this:

Want me to update the plan with these revised estimates, or do you have other questions before we proceed?

Same pattern as the game conversation: nuanced analysis, always concluding with momentum toward building. The default posture was forward.

But this time, I did something different. Instead of asking more questions about how to build, I started expressing doubt in my prompts:

I’m trying to reason about whether this is even worth it still.

This could be a dropoff point instead of a conversion point.

I have no data to back this assumption.

Once I opened that door, the analysis sharpened. Instead of building on my assumptions, it started dismantling them:

What we’re assuming (without data):

- That app quality is a differentiator customers care about — you suspect this but acknowledge no data.

- That the demo wouldn’t be a leaky bucket — every fork in a funnel loses people.

And then:

The uncomfortable question: Who is the customer this is for?

This was the moment the conversation became genuinely valuable. Not because the AI had some brilliant strategic insight I couldn’t have reached myself, but because it organized the unknowns I was already thinking about into a structured argument I could act on. It named the assumptions, laid them out, and made the gaps visible.

The recommendation? Skip the build entirely. Run a low-cost experiment with tools we already had. See if there’s signal before investing in a polished version.

Two conversations, one explanation

The main difference between these two conversations wasn’t the model. It wasn’t the topic. It was my posture.

In the game conversation, the stakes were low and I knew it. I asked for an honest assessment, but I wasn’t genuinely looking for one. In the feature conversation, the stakes were real. It’s my job to be skeptical about what we build, and I brought that skepticism into the conversation. Once I started expressing genuine doubt, the model had something to work with.

This matters because of how these models are built. They’re trained on goal fulfillment: the reward signal pushes hard toward helpfulness, toward getting you to “yes,” toward doing the thing you asked for. You say “build X” and they build X. You say “evaluate X” and they evaluate X and then offer to build it. Even “tell me why this might fail” gets filtered through the same optimistic lens unless you bring real uncertainty to the table.

I’ve seen the extreme version of this. I once had Gemini commit and push code to main while I was still exploring whether the idea was worth pursuing. I hadn’t asked it to commit. I certainly hadn’t asked it to push. But the model inferred that the goal was to ship, and optimized accordingly.

A forge, not a filter

Neither idea was killed by scrutiny. Both came out stronger. The permission structure isn’t a filter that sorts good ideas from bad ones. It’s a forge that finds the weak points early, when they’re cheap to address.

The technique itself is almost embarrassingly simple:

- “Give me a critical assessment of whether this even makes sense.”

- “Who is the customer this is actually for?”

- “What are we assuming without data?”

- “Why would I not want to build this?”

These work because they reframe the model’s goal. Instead of optimizing for “help the user build X,” it’s now optimizing for “help the user evaluate X honestly.” The eagerness to please is still there, just pointed in a different direction.
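For anyone who wants to make the reframing repeatable, here is a minimal sketch of how those questions could be bundled into a reusable prompt. It only builds a string and does not call any model or API. The helper name `build_review_prompt`, the `doubts` parameter, and the opening instruction line are assumptions made for illustration; only the four questions come from the list above.

```python
from typing import Sequence

# The four questions quoted in the post. Everything else here is illustrative.
CRITICAL_QUESTIONS = (
    "Give me a critical assessment of whether this even makes sense.",
    "Who is the customer this is actually for?",
    "What are we assuming without data?",
    "Why would I not want to build this?",
)


def build_review_prompt(idea: str, doubts: Sequence[str] = ()) -> str:
    """Return a prompt that asks for evaluation before any building.

    Hypothetical helper: it formats text only and talks to no model.
    """
    if not idea.strip():
        raise ValueError("idea must not be empty")
    lines = [
        # Assumed framing line: points the model's goal at evaluation.
        "Do not write code or propose an implementation plan yet.",
        f"Idea under review: {idea.strip()}",
        "",
        "Answer each question before anything else:",
    ]
    # Number the questions so the answers can be checked one by one.
    lines += [f"{i}. {q}" for i, q in enumerate(CRITICAL_QUESTIONS, start=1)]
    if doubts:
        # Stating doubts up front gives the model something to work with.
        lines += ["", "Doubts I already have:"]
        lines += [f"- {d}" for d in doubts]
    return "\n".join(lines)


if __name__ == "__main__":
    print(build_review_prompt(
        "a new customer-facing feature for our mobile app",
        doubts=["I have no data to back this assumption."],
    ))
```

Passing your own doubts in is the part that matters most. The wording of the template is secondary to bringing real uncertainty into the conversation.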

The hard part isn’t the prompting. It’s the discipline of using it at the right moment. When you have an idea you’re excited about and a tool that can start building it in minutes, the temptation to skip the evaluation step is enormous. AI agents built for shipping code certainly won’t question the feature you ask them to build, unless you ask them to. The cost of building is approaching zero, which means you’ll build more wrong things simply because you can. Each one is cheap on its own, but the cumulative distraction is not. Every line of code you ship is a line you now have to maintain, debug, and reason about. Even if the build becomes nearly free, the ownership still isn’t.

Capacity without clarity

Garry Tan recently released gstack, a set of Claude Code skills that includes a /plan-ceo-review step, essentially formalizing this pattern into a reusable tool. It asks questions like “what’s the 10-star product hiding inside this request?” before any code gets written. Over 10,000 GitHub stars in 48 hours, along with plenty of skepticism. But the instinct behind it is right: the most valuable AI intervention often happens before the first line of code.

And yet, we’ve been almost myopically focused on AI’s ability to write code. Lines generated per hour, pull requests per week, percentage of code written by agents. These metrics matter, but they measure the part of product development that was already the most tractable. The hard parts (figuring out what to build, for whom, in what order, and whether it’s worth building at all) are where most product efforts actually fail. And those are exactly the parts where AI assistance is the most underleveraged.

The consequences are starting to show. Now that code is cheap, we’re seeing apps thrown together with no overarching vision. More Frankenstein products that are confusing to use and break in unpredictable ways. Every feature is technically feasible, so every feature gets built. The absence of a strong “should we?” before each “can we?” produces products that are somehow less than the sum of their parts.

I wrote in The Velocity Paradox that “a 10x software factory is effectively useless if it’s embedded in a 1x decision-making process.” I think we’re arriving at that day: the factory is getting faster, the decision-making hasn’t kept up, and the gap is becoming visible in what gets shipped.

This has real organizational consequences. If your engineers are dramatically more productive but your product direction can’t absorb that productivity, you end up in an uncomfortable place: you’ve supercharged capacity without supercharging clarity. In the worst case, the response is to cut the capacity: to let engineers go because the organization can’t figure out what to point them at. That’s entirely self-inflicted. The bottleneck was never the engineering. It was the thinking.

The models are perfectly capable of helping with that thinking. They just need permission.

AI has been supercharged for coding because code is verifiable: tests pass, builds succeed, the feedback loop is tight. But if we lift our gaze from the code, there are areas where AI could be an even stronger force multiplier. What if agents didn’t just build what you asked for, but by default pushed back on what you’re building? Not because you wrote a clever prompt, but because scrutiny was part of the process? That’s the real permission structure: not a technique you apply, but a default you set.