• Coding • 7 min read

Visualizing Ukkonen's Suffix Tree Algorithm

What if you could watch data structures being built?

Learning algorithms from books

I learned most of what I know about algorithms by poring over a copy of Introduction to Algorithms I got while in university. The book is very well known, especially among folks who got a formal education in computer science.

I worked through large sections of it, pen in hand, trying to trace through increasingly complex algorithms, building intuition for their behavior and tradeoffs. The book covers the theory in great depth: correctness proofs, recurrence relations, asymptotic analysis.

But there was often a gap between reading an algorithm and truly understanding it. The book would present pseudocode, sometimes a few diagrams showing state at key moments and theorems about performance characteristics. The work of tracing what actually happens was left as an exercise to the reader. I did that work with pen and paper, drawing trees, crossing out nodes, scribbling indices in the margins. It worked, eventually. But it was slow, error-prone, and the understanding felt fragile.

Implementing from a paper

Years later, I ran into this gap again. I was working on a programming puzzle that required near-instant substring search over a large dataset. After some research, I settled on a Generalized Suffix Tree: a data structure that indexes all suffixes of a set of strings, enabling lookups where is the length of the search pattern, even over an extremely large corpus.

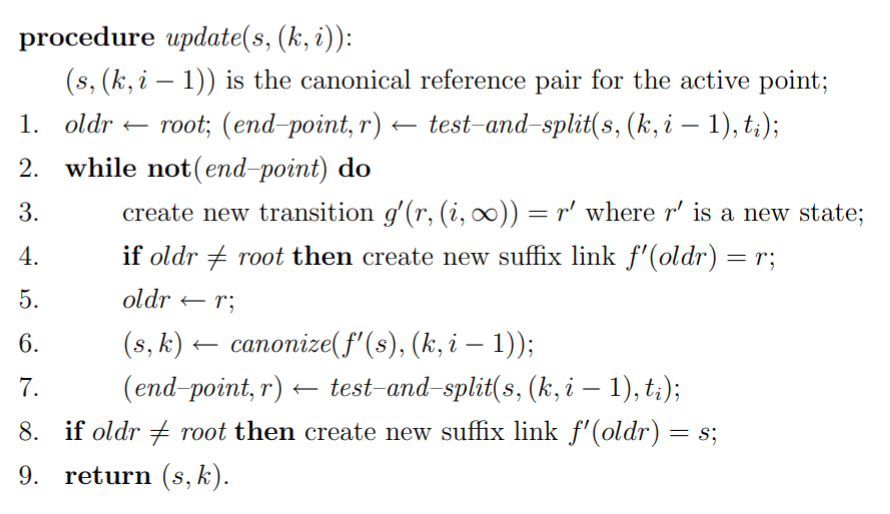

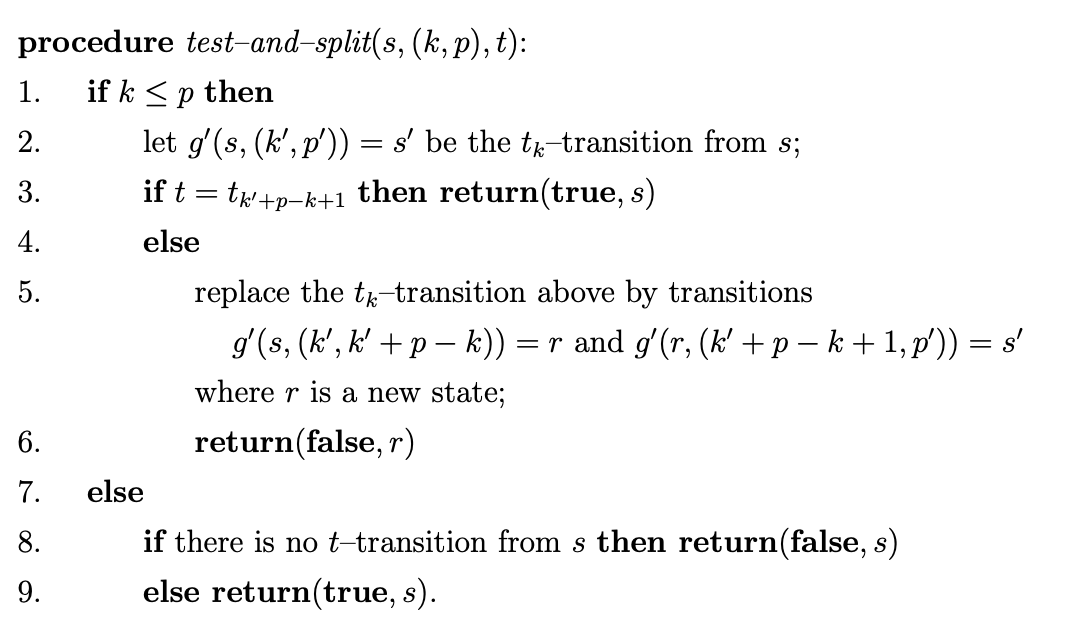

The algorithm I chose for building the tree was Ukkonen’s, described in a 1995 paper. The paper is well written and includes the full algorithm in pseudocode:

It took me a few hours to get right. Not because the pseudocode was wrong: it was precise and correct. The difficulty was that the algorithm manipulates a tree in non-obvious ways. There is an “active point” that walks around the tree. Suffix links connect internal nodes as shortcuts. Three different extension rules fire depending on what is already in the tree and what is being added. The pseudocode tells you what to do, but building an intuition for why it works requires watching it happen.

I did what I always did: I sketched trees by hand. I traced the algorithm on the string cacao, then on banana, drawing and redrawing nodes and edges as each character was processed. When my Java implementation finally produced correct results, I was relieved, but my understanding of the algorithm still felt like it had been assembled from fragments.

The biggest frustration was that I had no way to inspect what my code was actually building. I relied on the usual bag of tricks: print statements, breakpoints, inspecting memory structures one by one in a debugger. But that is like understanding a forest by looking at one tree at a time. What I wanted was to see the whole data structure after each operation — to watch the algorithm work.

The visualization I wish I had

That idea stuck with me: build the algorithm in a language where rendering the data structure is easy, then step through the construction visually. JavaScript and D3.js are a natural fit: the algorithm produces a tree, and D3 is very good at drawing trees.

So here it is. The visualization below builds a suffix tree for the string banana using Ukkonen’s algorithm, step by step. Use the playback controls to move through the construction. The gold-highlighted node is the active point. Dashed arcs are suffix links.

Press play to watch the tree being built

Add a string to begin building the suffix tree.

The paper describes the core logic across Sections 2–4. Here is test_and_split, the procedure that decides whether the tree needs to grow, which is a companion to the update function we showed earlier:

A few things to watch for in the visualization — each one corresponds to something in this procedure:

- Branching in

update: whentest_and_splitfinds no existing transition for the next character, it splits the edge if needed andupdatecreates a new leaf. These are the moments where the tree visibly grows. - Reaching the end point: when

test_and_splitfinds that a transition for the next character already exists, the algorithm has reached what the paper calls the end point of the current phase. All remaining suffixes are already represented implicitly, so the phase stops. This is the key to the algorithm’s time: the end point can only move forward through the string across phases, bounding the total work. - Suffix links (the paper’s suffix function ): if an internal node has path-label , its suffix link points to the node with path-label . The

updateprocedure follows these links to jump to the next insertion point instead of walking from the root every time. - Finally, the ”$” terminator converts an implicit suffix tree, where some suffixes may end mid-edge, into an explicit one where every suffix terminates at a distinct leaf.

Adding more strings

A generalized suffix tree indexes multiple strings. Each string is added with its own terminator, and the tree grows incrementally. Below, panama is added after banana. Step through and notice how much of the tree structure already exists from the first string.

Press play to watch the tree being built

Add a string to begin building the suffix tree.

Searching

Once the tree is built, searching for a pattern means matching characters along edges from the root. The visualization below has both strings pre-loaded. Try searching for ana, then try pan, ban, xyz.

Press play to watch the tree being built

Add a string to begin building the suffix tree.

Try it yourself

An empty tree, yours to experiment with. Add strings, watch the construction, search for patterns. Use the scroll wheel to zoom and click-drag to pan if the tree gets large.

Press play to watch the tree being built

Add a string to begin building the suffix tree.

Beyond suffix trees

What excites me most is how well this generalizes. The gap between an algorithm on paper and an algorithm in memory has always been one of the hardest parts of learning computer science. Textbooks give you static diagrams. Debuggers give you one node at a time. Neither shows you the whole picture in motion.

Browser-based rendering, interactive SVGs, and JavaScript engines fast enough to run non-trivial algorithms client-side make it possible to close that gap for almost any data structure. Red-black trees, B-trees, tries, skip lists, hash tables with open addressing: all of them would benefit from this kind of treatment. Not as a replacement for the theory, but as a companion to it. Read the algorithm, then watch it work.

There is an obvious question lurking here: why bother learning algorithms at all when you can ask an LLM to write one for you? I think the question misses the more interesting possibility. LLMs are not just code generators; they are learning accelerators. You can ask one to explain a single step of an algorithm, to walk through an edge case, or to generate a diagram of how components interact. When I started working in a new codebase recently, the fastest way for me to build a mental model was not reading code or documentation. It was asking an LLM to produce component and sequence diagrams: a much higher-bandwidth channel for understanding, at least for the way I think.

That is the real shift. Not that machines can write algorithms so we don’t have to learn them, but that they can teach us in ways that adapt to how each of us actually learns. Through visualizations, through diagrams, through conversation, through whatever representation makes the concept click. This post is one example. The next one might look completely different, tailored to a different person and a different way of thinking.

We write fewer algorithms from scratch in our day-to-day work than we used to. But we still benefit from understanding them, whether it’s to choose the right data structure, to debug performance issues, or to evaluate tradeoffs. And for those of us who enjoy algorithms for their own sake, the tools for learning them have never been better.

The original Java suffix tree implementation is open source on GitHub. For the full backstory, see the project page and the story of the programming puzzle that started it all. Ukkonen’s original paper remains the definitive reference for the algorithm.