• Leadership • 5 min read

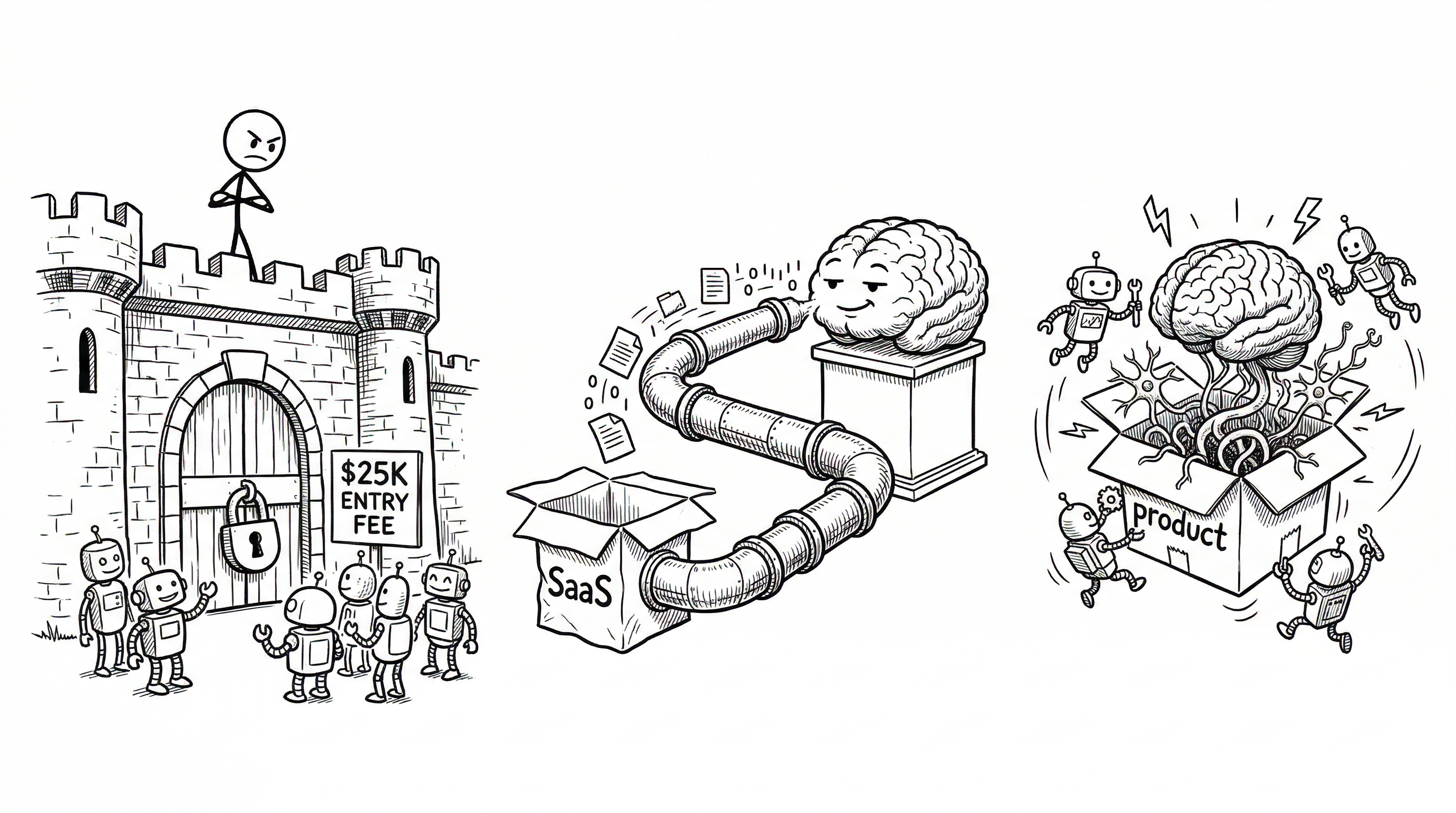

Fortresses, Pipes, and Brains

Three ways SaaS companies are responding to AI. Only one makes the product smarter

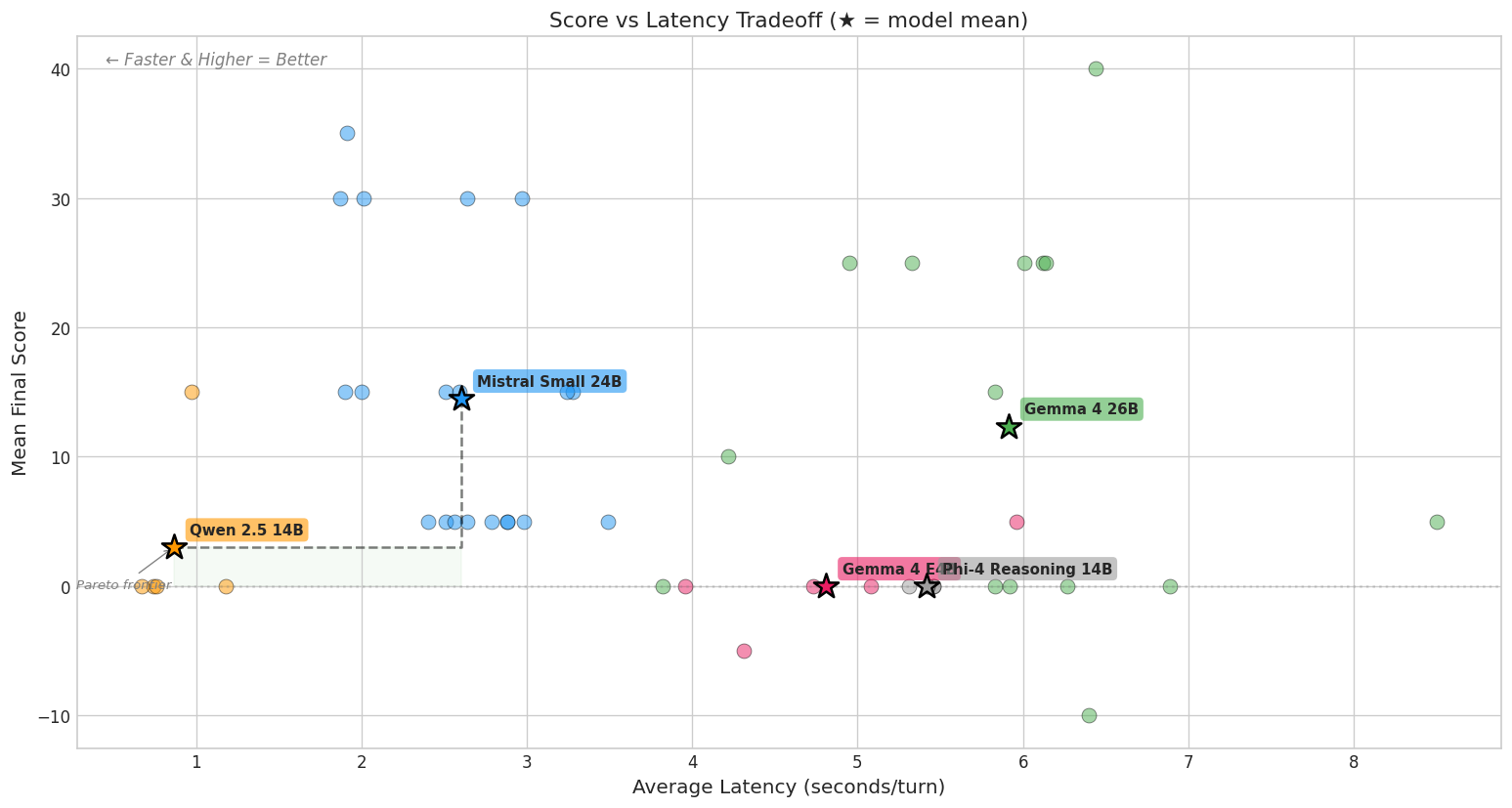

A few weeks ago, Workday’s CEO called AI agent startups “parasites” on an earnings call. Around the same time, Linear shipped an AI agent built directly into their product. Two very different answers to the same question: what happens when AI wants access to your product’s data?

I’ve been thinking about this a lot, partly because I’ve spent the last few months on the other side of that question: building my own AI-powered workflows on top of Linear using Claude and MCP. Triage automation, status synthesis, issue creation from Slack threads. It worked well enough. But looking back, I was essentially treating Linear as a database and doing all the reasoning somewhere else.

That experience, and this contrast between Workday and Linear, crystallized a pattern I think is worth naming.

Three responses to the same moment

Every SaaS company is facing the same pressure right now: AI agents want to interact with your product’s data. The responses I’m seeing fall into three categories.

The Fortress

Lock the data down. Charge $25,000 for data exports. Call anyone who builds on top of your APIs a parasite. This is Workday’s approach: treating data access as a zero-sum game where every external agent is a threat to the business model.

It’s a defensive posture that works for Workday specifically because their moat isn’t just the data: it’s the business logic, compliance rules, and domain expertise embedded in the product. Their customers aren’t going to replicate that in a prompt. But for companies whose moat is primarily data lock-in, this bet tends to age poorly.

The Pipe

This is where most of the industry is right now. You ship an MCP server or an API, and external AI agents pull your data out to reason about it elsewhere. The product becomes a data store. The intelligence lives in the chat agent, the coding assistant, the orchestration layer, anywhere but inside the product itself.

This was exactly my setup. I had Claude connected to Linear via MCP, and I built workflows that synthesized project context, triaged incoming issues, and generated status updates. The reasoning happened in Claude. Linear was the pipe.

It worked. But there was a ceiling. Every workflow I built required me to explicitly model what context to extract, how to reason about it, and what to push back. I was reconstructing, outside Linear, domain knowledge that Linear already had. The pipe pattern means the product doesn’t get smarter. It just gets read from.

The Brain

Linear’s approach is different. They ship MCP too, and you can still pipe data out to external agents. But they also ship a native agent that is opinionated about the process of building software in teams. It doesn’t just retrieve your issues on request. It triages. It synthesizes customer requests across projects. It catches risks. It drafts issues from meeting notes.

That’s not data extraction. That’s domain intelligence, running where the context is richest.

The difference is subtle but structural. An external agent reasoning about your Linear data is working with a limited snapshot: whatever it pulled through the pipe. A native agent has access to the full graph of relationships, the history of how work flows through your team, the patterns in how issues get triaged and resolved. It can be opinionated about the process, not just the data.

There’s a reason this matters more than it might seem. A lot of the current momentum in AI-assisted development is about keeping specs and context in the repo, which works well for the atomic coding loop: one developer, one feature branch. But the context that matters for team-level decisions (triage patterns, customer signal aggregation, cross-project dependencies, the messy handoffs between deciding what to build and how to build it) doesn’t live in the repo. It lives in the project management layer. That’s exactly the context a native agent can leverage, and an external one piping data out never fully sees.

The uncomfortable middle

Most SaaS companies today are in the pipe position, whether they intended to be or not. They shipped an API or an MCP endpoint, and the AI ecosystem is using them as data sources for external reasoning. The product itself isn’t getting smarter. It’s becoming infrastructure.

That’s not necessarily a bad position. Infrastructure is valuable. But it’s a different business from what most SaaS companies think they’re running. If your product is a pipe, your value is in the data you hold and the integrations you support. That’s a game where switching costs matter more than product quality.

The fortress position is worse. It delays the inevitable while annoying customers. Export fees and API restrictions aren’t a moat; they’re a countdown timer.

The brain position is the hardest to execute but the most durable. It requires the company to actually understand the domain well enough to embed useful intelligence. Not just wrap an LLM around the UI, but develop opinions about how work should flow. Linear can do this because they’ve been opinionated about the process of building software since their inception. The agent is an extension of that product philosophy, not a bolted-on feature.

What this means

I think we’re early in a sorting process. Over the next year or two, every SaaS product will end up in one of these three positions, and the market will price them accordingly.

The interesting question isn’t whether AI agents will interact with SaaS data. That’s already happening. The question is where the intelligence lives. If it lives outside the product, the product is a pipe. If it lives inside, the product has a shot at becoming more valuable, not less.

For the products I depend on in my own workflow, I’m increasingly paying attention to which ones are building brains and which ones are just installing pipes.